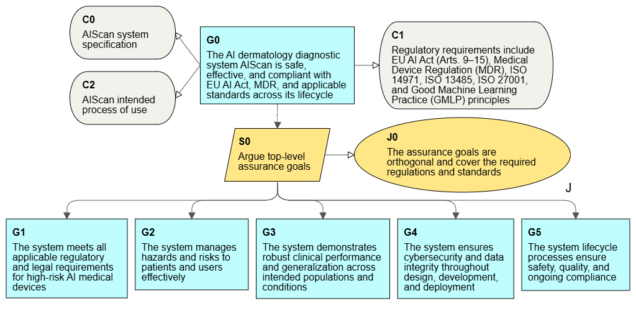

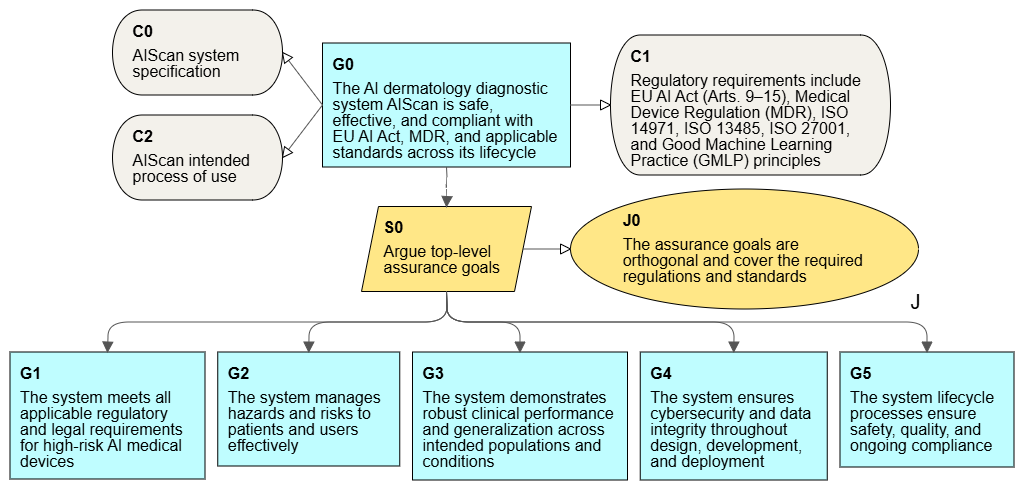

Certification of AI systems is a complex process that must satisfy both AI-specific regulations and sector-specific regulations. In particular, the AI Act (Regulation (EU) 2024/1689) defines a category of high-risk systems that are subject to additional requirements. For such a complex process, an assurance case offers a way to manage system development effectively with certification in mind.

The AI Act defines high-risk systems somewhat differently from what we are used to in the field of critical systems: in addition to systems affecting human health and critical infrastructure, it also covers systems affecting fundamental rights in the EU, employment, access to services, justice, or democratic processes. The use of AI in a medical system, for example in imaging diagnosis, is a typical example of a high-risk system within the meaning of the AI Act. It is also a safety-critical system, subject to regulations and standards for the safety of medical devices.

Certification requirements for medical AI

To talk about the use of assurance cases for such systems, we need to start by defining the scope of formal requirements.

- Such a system meets the criteria of a high-risk system within the meaning of the AI Act, which also specifies the requirements for its conformity assessment before it is authorized for use.

- ISO 13485 is the baseline requirement for qualifying medical systems. The standard specifies the requirements for a quality management system (QMS), which the AI Act also requires.

- Another required industry standard is ISO 14971 for risk management for medical devices. Risk management is also required in the AI Act for high-risk systems.

- We must also not forget the MDR (EU Medical Device Regulation 2017/745).

- The AI Act also contains cybersecurity requirements. It does not directly require the application of ISO 27001, but the security controls it calls for can be found in that standard.

A more detailed analysis may identify further standards and regulations (such as GMPL), but this group provides a good basis for working on the AI system and preparing for its certification. We therefore have a fairly wide range of processes and documentation that are evaluated as part of system approval. There are several independent assessment points, including the clinical credibility assessment, quality management system (QMS) audits, and finally the system's conformity assessment under the AI Act and the MDR.

System certification is not the end but a transition point to the system operation phase. Post-market audits follow, especially after incidents. More importantly for AI systems, each new version in which the AI model is modified requires recertification, unless the certification already covers continuous learning. Together, this creates a need for strong management of documentation consistency, change control, and versioning. An assurance case can be the focal point of this work.
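The link between model changes and documentation consistency can be illustrated with a small check. This is a minimal sketch, not a prescribed method: the `Evidence` class, the evidence names, and the version strings are all hypothetical, and it assumes each evidence item records the model version it was produced against.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """One evidence item referenced by the assurance case (hypothetical model)."""
    name: str
    model_version: str  # model version the evidence was produced against


def stale_evidence(items: list[Evidence], current_version: str) -> list[str]:
    """Return names of evidence items produced against a different model version."""
    return [e.name for e in items if e.model_version != current_version]


# Illustrative data only; names and versions are invented for this example.
evidence = [
    Evidence("clinical_validation_report", "1.2.0"),
    Evidence("risk_management_file", "1.3.0"),
    Evidence("bias_assessment", "1.2.0"),
]

# After the model moves to 1.3.0, two items still refer to the old version.
print(stale_evidence(evidence, "1.3.0"))
```

A list like this only flags candidates for review; whether a given item actually has to be regenerated for recertification remains a judgment for the team and the certification body.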

The main benefits of using an assurance case for such a system are, above all:

- Efficiency of the preparation process and the certification itself thanks to the systematic approach and transparency provided by the argumentation

- Risk reduction through more efficient and earlier detection of vulnerabilities and non-conformities

- Uniform reporting for all areas of the system throughout the life of the system

- Easier interaction with auditors and the certification body

- Efficiency of building assurance case versions for new versions of the system, especially when automating argumentation generation in the CI/CD pipeline
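To make the idea of automated checks in a CI/CD pipeline more concrete, the sketch below models an assurance case as a tree of claims and reports leaf claims that have no evidence attached. It is an assumption-laden illustration: the `Claim` structure, the claim texts, and the file names are hypothetical and do not correspond to any particular assurance case tool or notation.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """A node in a simplified argument tree (hypothetical structure)."""
    text: str
    evidence: list[str] = field(default_factory=list)
    subclaims: list["Claim"] = field(default_factory=list)


def unsupported_claims(claim: Claim) -> list[str]:
    """Collect leaf claims that have no evidence attached."""
    if not claim.subclaims:
        return [] if claim.evidence else [claim.text]
    missing: list[str] = []
    for sub in claim.subclaims:
        missing.extend(unsupported_claims(sub))
    return missing


# Illustrative argument; claim texts and evidence file names are invented.
case = Claim(
    "The diagnostic AI system is acceptably safe and compliant",
    subclaims=[
        Claim("Risks are managed", evidence=["risk_management_file.pdf"]),
        Claim("A quality management system is in place", evidence=["qms_audit_report.pdf"]),
        Claim("Cybersecurity requirements are met"),  # no evidence yet
    ],
)

missing = unsupported_claims(case)
if missing:
    print("Unsupported claims:", missing)
```

In a pipeline, a step like this could fail the build when `missing` is non-empty, so that gaps in the argument surface with each new system version rather than shortly before an audit.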

Where to start?

The certification or qualification of systems often involves adhering to numerous regulations and standards, and this system is no exception. The first decisions usually concern the organization of the assurance case process and the argumentation architecture. It is best to start these activities early, at the system concept stage. Argumentation templates, in particular those covering an integrated set of requirements from the applicable regulations and standards, are a good starting point.

The scope of certification requirements includes safety requirements, but also requirements specific to AI technology. The main requirements relate to model accuracy and sensitivity, in order to minimize false positives and false negatives, and to clinical validation. These bear directly on safety in the medical sector, but there are also requirements specific to AI, for example avoiding bias and ensuring interpretability, i.e. the ability to explain decisions. A full decomposition of the requirements creates a complex argumentation template that can be adapted to a given system and its specifics.
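As a reminder of how the accuracy and sensitivity requirements relate to false positives and false negatives, here is a minimal sketch that computes the standard metrics from confusion-matrix counts. The function names and the counts are illustrative assumptions, not data from any real system, and specificity is included only because it is the usual counterpart of sensitivity.

```python
def sensitivity(tp: int, fn: int) -> float:
    """True positive rate: share of actual positives the model detects."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """True negative rate: share of actual negatives correctly cleared."""
    return tn / (tn + fp)


def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Share of all cases classified correctly."""
    return (tp + tn) / (tp + tn + fp + fn)


# Invented counts for illustration: 100 positive and 900 negative cases.
tp, fn, tn, fp = 90, 10, 850, 50

print(f"sensitivity = {sensitivity(tp, fn):.3f}")
print(f"specificity = {specificity(tn, fp):.3f}")
print(f"accuracy    = {accuracy(tp, tn, fp, fn):.3f}")
```

With imbalanced data such as this, accuracy alone can look good even when many positives are missed, which is one reason a requirement on sensitivity is usually stated separately.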

An assurance case provides a good level of process control at every stage and helps reduce risk.